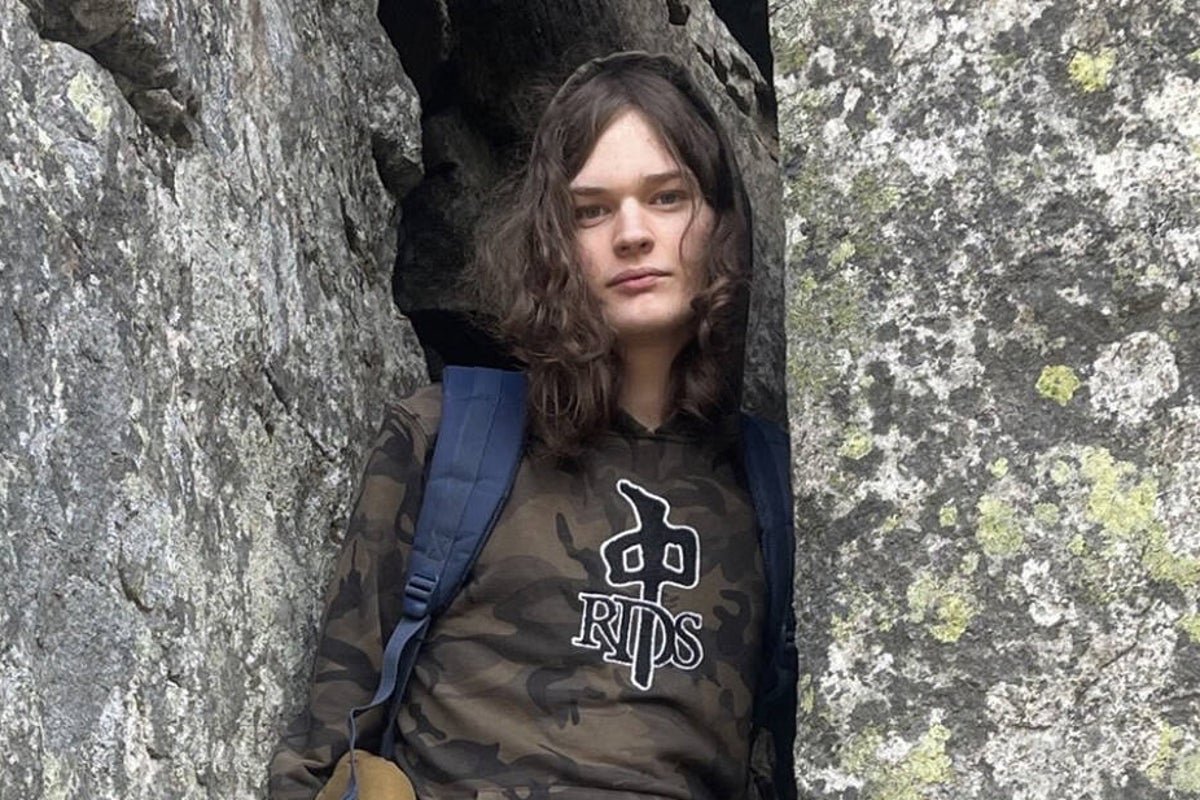

OpenAI Employees Raised Alarm About Mass Shooting Suspect Months Ago

Recent reports indicate that OpenAI employees raised concerns about a mass shooting suspect months before the incident. The revelation has sparked debate about the role of artificial intelligence in identifying and preventing violent behaviour. The suspect, who has not been named, was reportedly flagged by the employees because of the suspect’s online activity. Their concerns were allegedly raised in an internal report that highlighted the suspect’s disturbing behaviour.

The incident has raised questions about the effectiveness of AI-powered monitoring systems in detecting and preventing violent crimes. While AI can analyse vast amounts of data, it is not a foolproof solution and requires human oversight to identify potential threats. The case has also sparked a discussion about the importance of responsible AI development and the need for transparency in AI-powered decision-making processes.

Experts argue that AI can be a valuable tool in identifying potential threats, but it is crucial to strike a balance between AI-powered monitoring and human judgment. The use of AI in law enforcement and national security has been increasing in recent years, with many agencies relying on AI-powered systems to analyse data and identify potential threats. However, the use of AI in these contexts also raises concerns about bias, accountability, and transparency.

The incident has also highlighted the need for greater collaboration between tech companies, law enforcement agencies, and policymakers to develop effective strategies for preventing violent crimes. By working together, these stakeholders can share knowledge, best practices, and resources to develop more effective solutions for identifying and preventing violent behaviour. This collaboration can also help to address the complex social and economic factors that contribute to violent crime.

The role of social media companies in monitoring and reporting suspicious activity has also come under scrutiny. While social media companies have made efforts to improve their content moderation policies, more needs to be done to address the spread of hate speech and violent content online. The incident has also sparked a discussion about the importance of digital literacy and online safety, particularly among young people.

The incident has highlighted the complex challenges of identifying and preventing violent crimes in the digital age. AI can be a valuable tool in this effort, provided it is paired with human judgment. By working together, stakeholders can develop more effective solutions for preventing violent behaviour and promoting online safety.

The use of AI in law enforcement and national security is a complex issue that requires careful consideration of the potential benefits and risks. As the use of AI in these contexts continues to grow, it is essential to prioritise transparency, accountability, and human oversight to ensure that AI-powered systems are used responsibly and effectively. By doing so, we can harness the potential of AI to improve public safety while also protecting human rights and civil liberties.

Furthermore, the incident has highlighted the need for greater investment in community-based programmes that address the root causes of violent crime. By providing support and resources to vulnerable individuals and communities, we can help to prevent violent behaviour and promote social cohesion. This approach requires a long-term commitment to addressing the complex social and economic factors that contribute to violent crime.

Ultimately, the prevention of violent crimes requires a multifaceted approach that combines the use of AI-powered monitoring systems with human judgment, community-based programmes, and social media regulation. By working together, we can develop more effective solutions for identifying and preventing violent behaviour, and promoting a safer and more just society for all.